Caching improves performance.

Applications reduce repeated computation by storing previously generated results. Databases handle fewer queries. Response times decrease. Consequently, caching is often implemented early in optimization efforts.

However, caching also changes system behavior.

At Wisegigs.eu, performance audits frequently reveal systems where aggressive caching improves speed temporarily but introduces reliability issues. Data becomes inconsistent. Updates fail to propagate. Debugging becomes significantly more complex.

These outcomes are predictable.

Caching trades computation cost for state management complexity.

Caching Is Often Treated as a Universal Solution

Performance problems trigger immediate responses.

When systems slow down, teams frequently introduce caching layers without fully diagnosing bottlenecks. Because caching produces visible improvements, it becomes a default optimization strategy.

However, caching does not eliminate underlying inefficiencies.

Slow queries remain slow.

Inefficient logic remains inefficient.

Instead, caching hides these problems temporarily.

Consequently, systems may appear fast while structural issues persist.

Caching Changes System Behavior

Caching introduces state.

Instead of computing results dynamically, systems return stored responses. This shift alters how data flows through the application.

Responses no longer necessarily reflect real-time data.

Responses depend on cache state, expiration rules, and invalidation logic. Therefore, application behavior depends on caching configuration as well as on execution logic.

This transformation introduces new failure modes.

Stale Data Introduces Consistency Problems

Cached data may become outdated.

When underlying data changes, cached responses may not update immediately. As a result, users may see inconsistent information across requests.

Common scenarios include:

outdated product pricing

delayed content updates

inconsistent user session data

mismatched inventory status
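To make the staleness window concrete, here is a minimal cache-aside sketch with a fixed time-to-live (TTL). The `TTLCache` class, its `get_or_load` method, and the injectable clock are hypothetical names chosen for illustration. A dict stands in for the database. Real deployments would typically use Redis, Memcached, or a framework cache rather than an in-process dict.

```python
import time
from typing import Any, Callable, Dict, Hashable, Tuple


class TTLCache:
    """Hypothetical cache-aside helper: entries expire ttl_seconds after they are stored."""

    def __init__(self, ttl_seconds: float, clock: Callable[[], float] = time.monotonic) -> None:
        self.ttl = ttl_seconds
        self.clock = clock  # injectable so the example can control time
        self._entries: Dict[Hashable, Tuple[float, Any]] = {}

    def get_or_load(self, key: Hashable, loader: Callable[[Hashable], Any]) -> Any:
        now = self.clock()
        entry = self._entries.get(key)
        if entry is not None and now < entry[0]:
            return entry[1]  # hit: served from cache, even if the source has changed
        value = loader(key)  # miss or expired: read the source of truth
        self._entries[key] = (now + self.ttl, value)
        return value


# Demo with a dict standing in for the database and a manually advanced clock.
prices = {"sku-1": 10.0}
fake_now = [0.0]
cache = TTLCache(ttl_seconds=60, clock=lambda: fake_now[0])


def load_price(sku: Hashable) -> float:
    return prices[sku]


first = cache.get_or_load("sku-1", load_price)       # miss: loads 10.0
prices["sku-1"] = 12.0                                # price changes in the database
fake_now[0] = 30.0
during_ttl = cache.get_or_load("sku-1", load_price)  # hit: still 10.0 (stale)
fake_now[0] = 61.0
after_ttl = cache.get_or_load("sku-1", load_price)   # expired: reloads 12.0
print(first, during_ttl, after_ttl)                   # 10.0 10.0 12.0
```

Between the database write and expiry, every request receives the old price. Shortening the TTL narrows that window but increases database load. It never closes the window entirely.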

These inconsistencies reduce system reliability.

Users expect accuracy, not just speed.

Cache Invalidation Becomes a Primary Risk

Cache invalidation determines correctness.

When data changes, the system must decide when and how to refresh cached values. Incorrect invalidation strategies lead to stale data or unnecessary recomputation.

This problem is widely recognized.

Cache invalidation is often described as one of the hardest problems in computer science.

Effective invalidation strategies require:

precise dependency tracking

event-driven cache updates

controlled expiration policies

clear data ownership boundaries
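One way to combine dependency tracking with event-driven updates is tag-based invalidation. Each cache entry records which data records it was built from, and a write event for a record evicts every dependent entry. The sketch below is a hypothetical in-memory `TaggedCache`, not a specific library's API. It assumes the service that owns the data calls `on_data_changed` after each write.

```python
from collections import defaultdict
from typing import Any, Dict, Hashable, Iterable, Optional, Set


class TaggedCache:
    """Hypothetical in-memory cache with tag-based (dependency) invalidation."""

    def __init__(self) -> None:
        self._values: Dict[Hashable, Any] = {}
        # Reverse index: data record tag -> cache keys built from that record.
        self._keys_by_tag: Dict[str, Set[Hashable]] = defaultdict(set)

    def set(self, key: Hashable, value: Any, tags: Iterable[str]) -> None:
        self._values[key] = value
        for tag in tags:
            self._keys_by_tag[tag].add(key)

    def get(self, key: Hashable, default: Optional[Any] = None) -> Any:
        return self._values.get(key, default)

    def on_data_changed(self, tag: str) -> Set[Hashable]:
        """Event handler: the service that owns `tag` calls this after a write."""
        keys = self._keys_by_tag.pop(tag, set())
        for key in keys:
            self._values.pop(key, None)  # evict every entry that depends on the record
        return keys


cache = TaggedCache()
cache.set("page:/products/1", "<html>price 10</html>", tags=["product:1"])
cache.set("page:/category/shoes", "<html>listing</html>", tags=["product:1", "product:2"])
cache.set("page:/products/2", "<html>price 20</html>", tags=["product:2"])

invalidated = cache.on_data_changed("product:1")
print(sorted(invalidated))            # ['page:/category/shoes', 'page:/products/1']
print(cache.get("page:/products/2"))  # <html>price 20</html>
```

This sketch deliberately errs toward over-invalidation. Tag sets are never pruned, so a key may occasionally be evicted more often than necessary. That costs a recomputation rather than correctness.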

Without these mechanisms, caching introduces unpredictable behavior.

Redis documentation discusses cache consistency considerations:

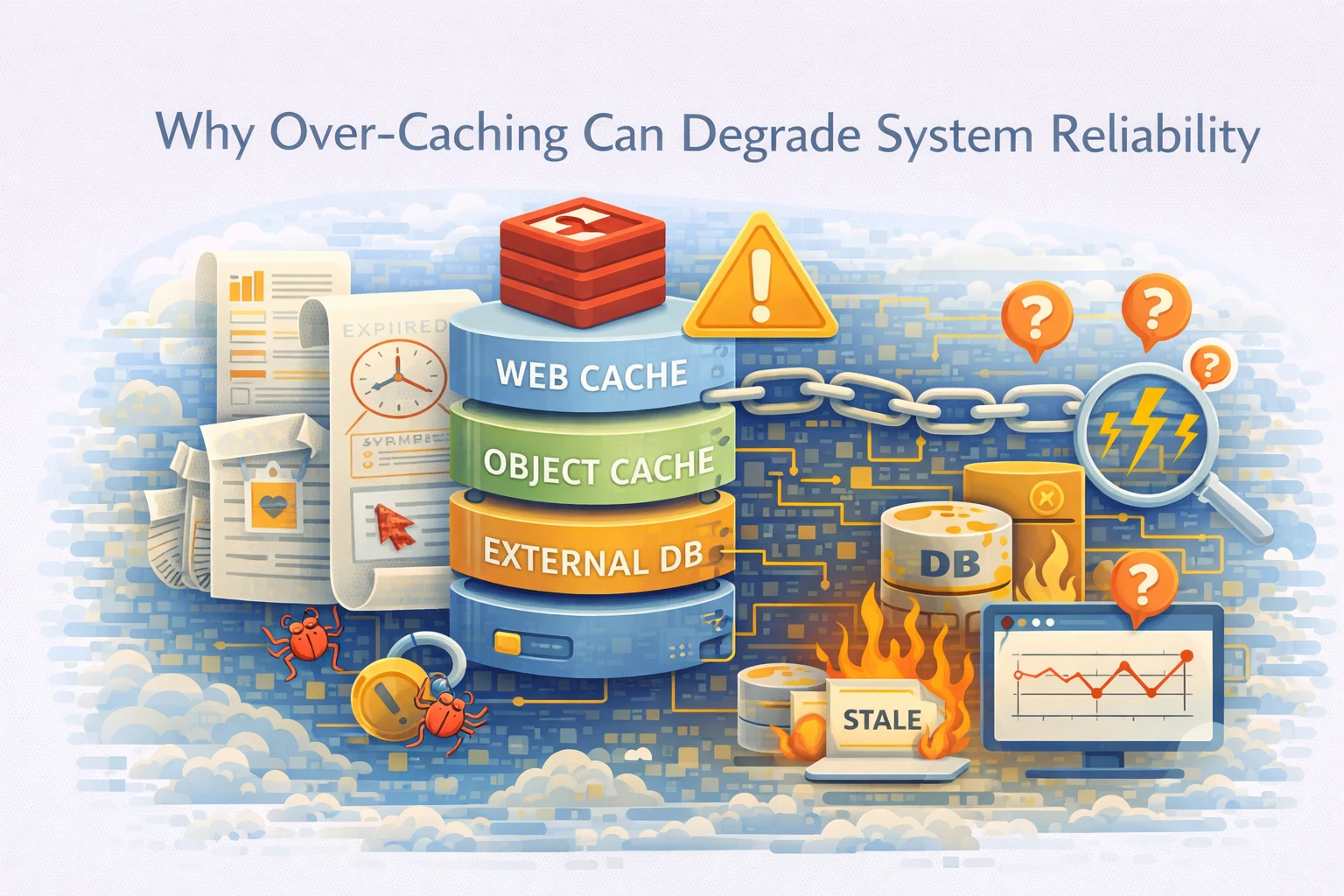

Multiple Cache Layers Increase Complexity

Modern systems often use multiple caching layers.

These may include:

application-level caching

object caching (Redis or Memcached)

full-page caching

CDN edge caching

Each layer operates independently.

Consequently, data may exist in multiple states simultaneously. Synchronizing these layers becomes complex.

For example:

CDN cache may serve outdated content

object cache may conflict with database state

application cache may override recent updates
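The sketch below illustrates the last case with two hypothetical layers. A per-process dict (the local layer) sits in front of a shared dict that stands in for Redis or Memcached. The write path invalidates only the shared layer. This is an assumption chosen to reproduce the problem.

```python
from typing import Dict


class TwoLayerCache:
    """Hypothetical read path: a per-process dict in front of a shared store."""

    def __init__(self, shared: Dict[str, str]) -> None:
        self.local: Dict[str, str] = {}  # in-process layer, one per app server
        self.shared = shared             # stands in for Redis/Memcached

    def get(self, key: str, db: Dict[str, str]) -> str:
        if key in self.local:
            return self.local[key]
        if key in self.shared:
            value = self.shared[key]
        else:
            value = db[key]          # both layers missed: read the database
            self.shared[key] = value
        self.local[key] = value
        return value


db = {"stock:42": "in stock"}
shared: Dict[str, str] = {}
server_a = TwoLayerCache(shared)
server_a.get("stock:42", db)         # warms both layers with "in stock"

# Write path (assumed): update the database, invalidate only the shared layer.
db["stock:42"] = "sold out"
shared.pop("stock:42", None)

server_b = TwoLayerCache(shared)     # e.g. a server that started after the write
print(server_a.get("stock:42", db))  # in stock  (stale local copy)
print(server_b.get("stock:42", db))  # sold out
```

Server A keeps returning its local copy until that copy is evicted, while a freshly started server reads the new value. Invalidating every layer on write, or giving the local layer a very short TTL, are common ways to limit this window.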

This layered architecture increases coordination overhead.

Debugging Becomes More Difficult

Caching complicates debugging.

When issues arise, developers must determine whether problems originate from application logic or cache behavior. Reproducing bugs becomes difficult because cached responses may differ across environments.

Typical debugging challenges include:

inconsistent test results

difficulty reproducing stale data issues

hidden dependencies between cache layers

environment-specific behavior
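One way to reduce this ambiguity is to make cache behavior visible in every response. Report whether a value was a hit or a miss and how old it is, and allow a bypass that reads straight from the source. The `DebuggableCache` and `CachedResult` names below are hypothetical. In an HTTP service, these fields could be written to logs or to a response header, similar in spirit to the `Cache-Status` header defined in RFC 9211.

```python
import time
from dataclasses import dataclass
from typing import Any, Callable, Dict, Hashable, Tuple


@dataclass
class CachedResult:
    value: Any
    status: str         # "HIT", "MISS", or "BYPASS"
    age_seconds: float  # age of the value at the moment it was returned


class DebuggableCache:
    """Hypothetical cache that reports where each value came from (expiry omitted for brevity)."""

    def __init__(self, clock: Callable[[], float] = time.monotonic) -> None:
        self.clock = clock
        self._store: Dict[Hashable, Tuple[float, Any]] = {}

    def get(self, key: Hashable, loader: Callable[[Hashable], Any], bypass: bool = False) -> CachedResult:
        now = self.clock()
        if bypass:
            # Debug path: read the source directly and leave the cache untouched.
            return CachedResult(loader(key), "BYPASS", 0.0)
        if key in self._store:
            stored_at, value = self._store[key]
            return CachedResult(value, "HIT", now - stored_at)
        value = loader(key)
        self._store[key] = (now, value)
        return CachedResult(value, "MISS", 0.0)


fake_now = [100.0]
cache = DebuggableCache(clock=lambda: fake_now[0])
source = {"user:7": "old name"}

cache.get("user:7", source.__getitem__)  # MISS: stores "old name"
source["user:7"] = "new name"
fake_now[0] = 145.0
cached = cache.get("user:7", source.__getitem__)
fresh = cache.get("user:7", source.__getitem__, bypass=True)
print(cached)  # CachedResult(value='old name', status='HIT', age_seconds=45.0)
print(fresh)   # CachedResult(value='new name', status='BYPASS', age_seconds=0.0)
```

Comparing the HIT and BYPASS results for the same key shows immediately that the outdated name comes from the cache rather than from application logic.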

These factors increase troubleshooting time.

Systems become harder to reason about.

Performance Gains Can Be Misleading

Caching improves perceived performance.

However, improvements may mask underlying inefficiencies. Systems appear fast under normal conditions but fail under specific scenarios.

For example:

cache misses trigger slow database queries

frequent invalidation under high traffic sends bursts of requests to the database

cache warm-up delays affect initial requests

In these cases, performance becomes inconsistent.

Average response time improves.

Worst-case latency remains problematic.
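A short worked example shows how the average can look healthy while the tail does not. The numbers are hypothetical: 95 of 100 requests hit the cache at 2 ms, and 5 miss and run a 400 ms query. The percentile helper uses the nearest-rank method.

```python
import math
from statistics import mean
from typing import List


def nearest_rank_percentile(samples: List[float], pct: float) -> float:
    """Nearest-rank percentile: smallest value with at least pct% of samples <= it."""
    if not samples or not 0 < pct <= 100:
        raise ValueError("need samples and 0 < pct <= 100")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]


# Hypothetical traffic: 95 cache hits at 2 ms, 5 misses that run a 400 ms query.
latencies_ms = [2.0] * 95 + [400.0] * 5

print(f"mean = {mean(latencies_ms):.1f} ms")                         # mean = 21.9 ms
print(f"p50  = {nearest_rank_percentile(latencies_ms, 50):.0f} ms")  # p50  = 2 ms
print(f"p99  = {nearest_rank_percentile(latencies_ms, 99):.0f} ms")  # p99  = 400 ms
```

The mean is about 22 ms. Yet because more than 1% of requests miss, the 99th percentile equals the full 400 ms query time.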

Therefore, caching may hide rather than solve performance issues.

Observability Is Required for Safe Caching

Caching requires visibility.

Without observability, teams cannot evaluate cache effectiveness or detect consistency issues. Monitoring systems must track both performance and correctness.

Key metrics include:

cache hit rate

cache miss latency

invalidation frequency

data freshness indicators

error rates related to stale data
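These signals can be collected with a handful of counters. The sketch below is a hypothetical in-process `CacheMetrics` class. It assumes the caller reports hits (with the age of the value served), misses (with their latency), invalidations, and stale reads detected by a separate check. In production these values would normally be exported to an existing monitoring system rather than kept in memory.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class CacheMetrics:
    """Hypothetical in-process counters for cache health signals."""
    hits: int = 0
    misses: int = 0
    invalidations: int = 0
    stale_reads: int = 0                 # reported by a separate consistency check
    miss_latencies_ms: List[float] = field(default_factory=list)
    max_served_age_s: float = 0.0        # freshness: oldest value returned on a hit

    def record_hit(self, age_s: float) -> None:
        self.hits += 1
        self.max_served_age_s = max(self.max_served_age_s, age_s)

    def record_miss(self, latency_ms: float) -> None:
        self.misses += 1
        self.miss_latencies_ms.append(latency_ms)

    def record_invalidation(self) -> None:
        self.invalidations += 1

    def record_stale_read(self) -> None:
        self.stale_reads += 1

    def snapshot(self) -> Dict[str, float]:
        lookups = self.hits + self.misses
        misses = self.miss_latencies_ms
        return {
            "hit_rate": self.hits / lookups if lookups else 0.0,
            "avg_miss_latency_ms": sum(misses) / len(misses) if misses else 0.0,
            "invalidations": float(self.invalidations),
            "max_served_age_s": self.max_served_age_s,
            "stale_read_ratio": self.stale_reads / self.hits if self.hits else 0.0,
        }


metrics = CacheMetrics()
for age in (1.0, 5.0, 30.0):
    metrics.record_hit(age)
metrics.record_miss(120.0)
metrics.record_invalidation()
metrics.record_stale_read()
snap = metrics.snapshot()
print(snap["hit_rate"], snap["max_served_age_s"])  # 0.75 30.0
```

A high hit rate combined with a rising stale-read ratio is exactly the "fast but inconsistent" pattern this article warns about.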

These signals help identify whether caching improves or degrades system behavior.

At Wisegigs.eu, caching strategies always include monitoring and validation mechanisms.

Visibility ensures reliability.

What Reliable Caching Strategies Prioritize

Effective caching requires discipline.

Reliable systems typically prioritize:

identifying bottlenecks before caching

limiting caching scope to appropriate data

designing clear invalidation strategies

minimizing overlapping cache layers

monitoring cache behavior continuously

validating data consistency regularly
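Regular consistency validation can be as simple as sampling cached keys and comparing them with the source of truth. The `audit_cache_consistency` function below is a hypothetical sketch. It assumes both the cache and the database can be read by key, and it keeps the sample small because each check adds a read against the source.

```python
import random
from typing import Any, Callable, Hashable, List, Optional, Sequence


def audit_cache_consistency(
    cached_keys: Sequence[Hashable],
    read_cache: Callable[[Hashable], Any],
    read_source: Callable[[Hashable], Any],
    sample_size: int,
    rng: Optional[random.Random] = None,
) -> List[Hashable]:
    """Hypothetical audit: compare a random sample of cached entries with the
    source of truth and return the keys whose cached value differs."""
    if rng is None:
        rng = random.Random()
    keys = list(cached_keys)
    sample = rng.sample(keys, min(sample_size, len(keys)))
    return [key for key in sample if read_cache(key) != read_source(key)]


cache = {"p1": 10, "p2": 20, "p3": 30}
db = {"p1": 10, "p2": 25, "p3": 30}  # p2 changed, but its cache entry was never invalidated
mismatches = audit_cache_consistency(list(cache), cache.__getitem__, db.__getitem__, sample_size=3)
print(mismatches)  # ['p2']
```

A mismatch is not automatically a bug, because values may legitimately differ within their TTL. Tracking the mismatch rate over time, however, shows whether invalidation is keeping up with writes.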

These practices balance performance improvements with system reliability.

Caching should support architecture, not replace it.

Conclusion

Caching improves performance.

However, excessive caching introduces complexity.

To recap:

caching does not remove underlying inefficiencies

it changes system behavior by introducing state

stale data creates consistency issues

invalidation becomes a critical challenge

multiple cache layers increase coordination complexity

debugging becomes more difficult

performance gains may hide deeper problems

observability is required for safe implementation

At Wisegigs.eu, reliable performance optimization begins with system analysis, controlled caching strategies, and continuous monitoring.

If your system feels fast but behaves inconsistently, over-caching may be the underlying cause.

Need help diagnosing caching or performance issues? Contact Wisegigs.eu