Scaling is widely assumed to improve performance.

When systems slow down or traffic increases, adding more resources feels like the logical response. Larger servers, additional instances, and expanded capacity appear to promise immediate relief. Because this approach seems intuitive, many teams treat scaling as a universal solution.

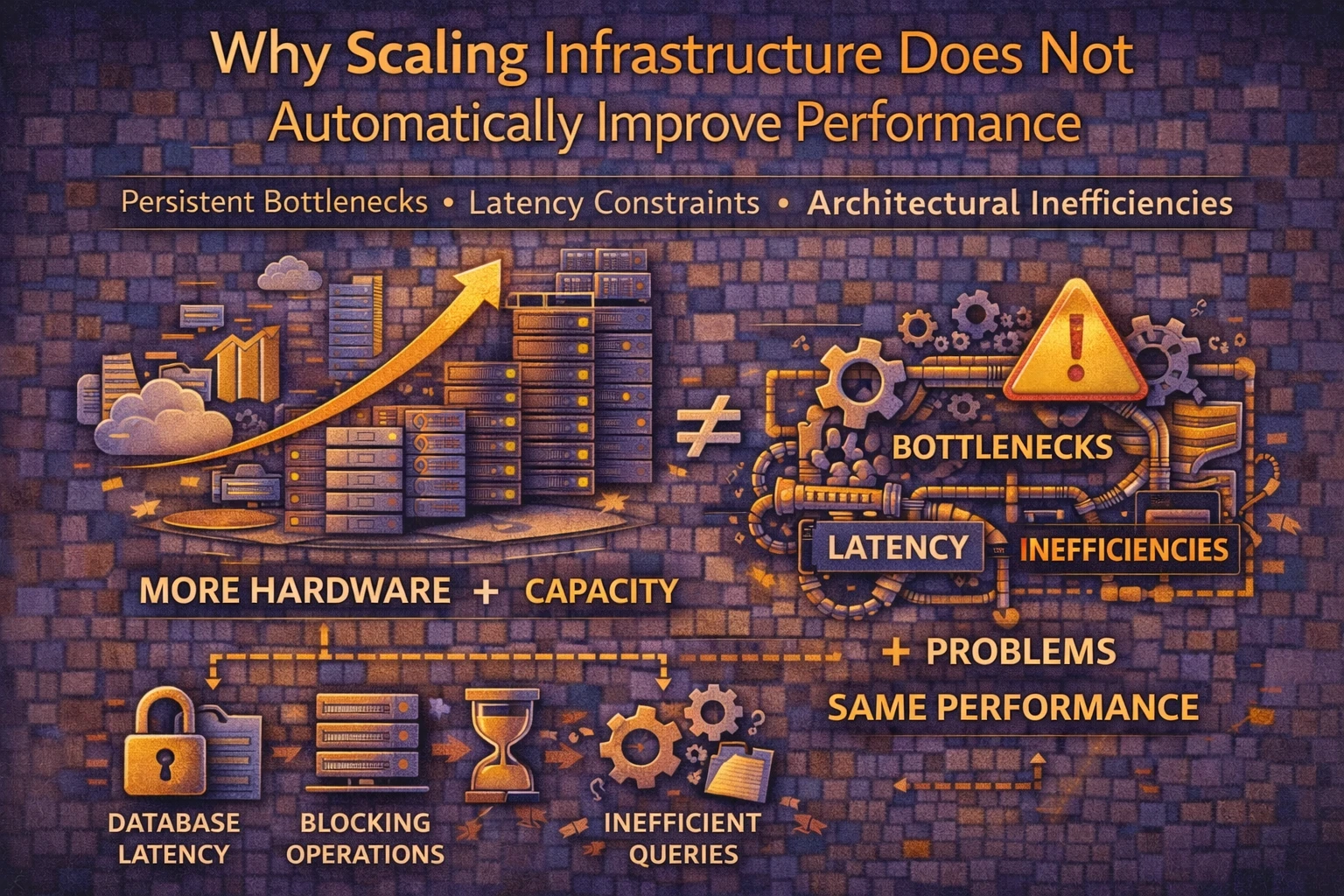

However, performance rarely improves through capacity alone.

At Wisegigs.eu, numerous infrastructure investigations reveal environments where scaling operations increase cost without delivering meaningful speed or stability gains. Despite expanded resources, high latency, inconsistent response times, and throughput ceilings often persist.

This outcome is not surprising.

Scaling amplifies system behavior rather than correcting architectural weaknesses.

Performance Problems Are Frequently Structural

Resource shortages represent only one failure mode.

In many cases, performance limitations originate from inefficient queries, blocking operations, excessive dependencies, or poorly distributed workloads. Increasing hardware capacity does not remove these constraints.

Instead, underlying inefficiencies remain intact.

Bottlenecks follow execution paths, not server size.

Google’s performance guidance consistently emphasizes identifying root causes before optimization:

https://web.dev/

Latency Behaves Differently From Capacity

Scaling primarily affects throughput.

More CPU cores or additional nodes increase the amount of work a system can process at once. Latency, by contrast, depends on execution efficiency, coordination overhead, and dependency resolution.

Consequently, a system may handle more requests while remaining slow per request.

Capacity growth does not guarantee response time improvement.
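The distinction can be sketched with a deliberately simple model. The 200 ms service time and the worker counts below are illustrative assumptions, not measurements, and the model ignores queueing entirely:

```python
def capacity_and_latency(workers: int, service_time_s: float) -> tuple[float, float]:
    """Return (max throughput in requests/s, per-request latency in seconds).

    Assumes each worker handles one request at a time and that no request
    ever waits in a queue.
    """
    throughput = workers / service_time_s  # grows with every added worker
    latency = service_time_s               # unaffected by the worker count
    return throughput, latency


for workers in (1, 4, 16):
    rps, latency = capacity_and_latency(workers, service_time_s=0.2)
    print(f"{workers:>2} workers: {rps:5.0f} req/s, {latency * 1000:.0f} ms per request")
```

Under these assumptions, adding workers multiplies the requests handled per second while each individual request still takes the same 200 ms.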

Horizontal Scaling Introduces Coordination Costs

Distributed systems require synchronization.

Load balancers, shared caches, session handling, and database coordination introduce new operational layers. Although these mechanisms enable growth, they also create additional latency sources.

Communication overhead becomes unavoidable.

More components often mean more delays.
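One common way to reason about this overhead is the Universal Scalability Law, a model that is not part of the original discussion and is introduced here only as an illustration. The contention and coherency coefficients below are hypothetical; real values have to be fitted from load-test data:

```python
def usl_relative_capacity(nodes: int, contention: float, coherency: float) -> float:
    """Relative capacity of `nodes` nodes compared with a single node.

    Universal Scalability Law:
        C(N) = N / (1 + contention * (N - 1) + coherency * N * (N - 1))
    """
    return nodes / (1 + contention * (nodes - 1) + coherency * nodes * (nodes - 1))


# Hypothetical coefficients, chosen only to make the shape of the curve visible.
CONTENTION = 0.05   # share of work that is serialized
COHERENCY = 0.001   # cost of keeping nodes consistent with each other

for nodes in (1, 8, 32, 64):
    capacity = usl_relative_capacity(nodes, CONTENTION, COHERENCY)
    print(f"{nodes:>2} nodes: {capacity:.2f}x the capacity of one node")
```

With these assumed coefficients, relative capacity at 64 nodes is lower than at 32: past a certain point the coordination term grows faster than the added capacity.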

Cloud architecture references describe these trade-offs extensively:

https://aws.amazon.com/architecture/

Inefficiencies Expand With Traffic

Scaling increases workload volume.

If request processing is inefficient, higher concurrency magnifies execution cost. Queries execute more frequently. Background tasks compete for resources. Contention effects intensify.

As a result, scaling may expose weaknesses that remained invisible under lighter load.

Growth makes existing weaknesses visible sooner.
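A rough way to see the effect is the textbook M/M/1 queueing formula, where mean response time equals 1 / (service rate - arrival rate). The single-queue model and both service rates below are simplifying assumptions for illustration, not a description of any specific system:

```python
def mean_response_time(arrival_rate: float, service_rate: float) -> float:
    """Mean time in an M/M/1 system (waiting plus service), in seconds."""
    if arrival_rate >= service_rate:
        raise ValueError("unstable queue: arrivals meet or exceed service capacity")
    return 1.0 / (service_rate - arrival_rate)


EFFICIENT_RATE = 200.0    # hypothetical: 5 ms of work per request
INEFFICIENT_RATE = 100.0  # hypothetical: 10 ms of work per request

for arrival_rate in (50.0, 90.0):
    fast = mean_response_time(arrival_rate, EFFICIENT_RATE)
    slow = mean_response_time(arrival_rate, INEFFICIENT_RATE)
    print(
        f"{arrival_rate:.0f} req/s: efficient {fast * 1000:.1f} ms, "
        f"inefficient {slow * 1000:.1f} ms, ratio {slow / fast:.1f}x"
    )
```

In this model, the inefficient path is 3 times slower at 50 requests per second and 11 times slower at 90. The per-request inefficiency is the same in both cases; only the traffic changed.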

Databases Often Remain the Dominant Constraint

Most applications depend heavily on databases.

While stateless layers scale horizontally with relative ease, data systems face stricter consistency and coordination requirements. Consequently, database latency frequently limits overall performance even after scaling other components.

Additional servers cannot compensate for slow queries.

Execution time accumulates at the data layer.
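A frequent example of a slow data-access pattern is the "N+1 query" problem. The sketch below uses Python's built-in `sqlite3` module with an in-memory database; the `authors` and `posts` tables and their contents are hypothetical and exist only for this illustration:

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE authors (id INTEGER PRIMARY KEY, name TEXT);
    CREATE TABLE posts (id INTEGER PRIMARY KEY, author_id INTEGER, title TEXT);
""")
conn.executemany("INSERT INTO authors VALUES (?, ?)",
                 [(i, f"author-{i}") for i in range(1, 51)])
conn.executemany("INSERT INTO posts VALUES (?, ?, ?)",
                 [(i, (i % 50) + 1, f"post-{i}") for i in range(1, 201)])


def titles_n_plus_one(conn):
    """One query for the authors, then one additional query per author."""
    queries = 1
    authors = conn.execute("SELECT id, name FROM authors").fetchall()
    result = {}
    for author_id, name in authors:
        rows = conn.execute(
            "SELECT title FROM posts WHERE author_id = ? ORDER BY id", (author_id,)
        ).fetchall()
        queries += 1
        result[name] = [title for (title,) in rows]
    return result, queries


def titles_single_join(conn):
    """The same data fetched with a single JOIN."""
    rows = conn.execute(
        "SELECT a.name, p.title FROM authors a "
        "JOIN posts p ON p.author_id = a.id ORDER BY p.id"
    ).fetchall()
    result = {}
    for name, title in rows:
        result.setdefault(name, []).append(title)
    return result, 1


slow_result, slow_queries = titles_n_plus_one(conn)
fast_result, fast_queries = titles_single_join(conn)
print(f"N+1 pattern: {slow_queries} queries, JOIN: {fast_queries} query")
```

Both functions return the same data, but the first issues one round trip per author. Adding application servers multiplies how often that pattern runs against the database; it does not reduce the number of queries per request.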

MySQL performance documentation highlights these limitations:

https://dev.mysql.com/doc/

Caching Does Not Replace Architectural Discipline

Caching reduces repeated computation.

However, scaling without understanding cache behavior often produces inconsistent results. Cache invalidation patterns, memory limits, and object lifecycles significantly influence effectiveness.

Improper caching strategies may even increase instability.

Predictability requires deliberate design.
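As a small illustration of how memory limits interact with access patterns, the sketch below uses `functools.lru_cache` from the Python standard library. The cache sizes, the working set of 120 keys, and the cyclic access pattern are assumptions chosen to make the effect obvious:

```python
from functools import lru_cache


def hit_ratio(cache_size: int, working_set: int, rounds: int = 5) -> float:
    """Cycle through `working_set` keys `rounds` times and report the hit ratio."""

    @lru_cache(maxsize=cache_size)
    def load(key: int) -> int:
        return key * 2  # stand-in for an expensive lookup

    for _ in range(rounds):
        for key in range(working_set):
            load(key)

    info = load.cache_info()
    return info.hits / (info.hits + info.misses)


# Hypothetical sizes: the working set is 120 keys in both cases.
print(f"cache of 100 entries: hit ratio {hit_ratio(100, 120):.2f}")
print(f"cache of 120 entries: hit ratio {hit_ratio(120, 120):.2f}")
```

With a cyclic access pattern, an LRU cache that is slightly smaller than the working set evicts each key just before it is needed again, so it produces no hits at all. Cache behavior depends on the shape of the workload, not only on whether a cache exists.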

Cloudflare’s performance resources discuss these dynamics:

https://www.cloudflare.com/learning/performance/

Scaling Can Mask, Not Solve, Problems

Temporary improvements create false confidence.

Additional capacity may delay visible failure while leaving structural issues unresolved. Over time, complexity increases, diagnosis becomes harder, and corrective actions grow more expensive.

Symptoms recede briefly, then return.

Illusions of stability frequently precede larger incidents.

Resource Utilization Rarely Scales Linearly

Real workloads exhibit uneven distribution.

CPU usage, memory pressure, I/O operations, and network behavior rarely grow in proportion to traffic or to one another. Consequently, scaled environments may still suffer from hotspots, imbalances, or scheduling anomalies.

More hardware does not guarantee balanced execution.

Workload shape determines efficiency.
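A minimal sketch of a hotspot, assuming requests are routed to nodes by `key % nodes` and that one hypothetical key (7) receives half of all traffic. The routing scheme and the traffic mix are illustrative assumptions:

```python
from collections import Counter


def busiest_node_load(keys: list[int], nodes: int) -> int:
    """Number of requests landing on the most heavily loaded node."""
    load = Counter(key % nodes for key in keys)
    return max(load.values())


# Hypothetical workload: 1000 requests, 500 of them for the single hot key 7,
# the remaining 500 spread evenly over keys 0..499.
requests = [7] * 500 + list(range(500))

for nodes in (4, 8):
    print(f"{nodes} nodes: busiest node handles {busiest_node_load(requests, nodes)} requests")
```

Doubling the node count from 4 to 8 only reduces the load on the busiest node from 625 to 562 requests, because the hot key still maps to a single node.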

Why Scaling Feels Like a Safe Default

Scaling appears low risk.

It avoids deep system analysis, requires fewer architectural decisions, and produces immediately measurable changes. Because the action is visible and reversible, organizations often prefer it over structural optimization.

Convenience drives strategy.

Effectiveness is assumed.

What Reliable Performance Engineering Prioritizes

Stable systems treat scaling as a consequence, not a remedy.

Effective teams:

Identify bottlenecks before expanding capacity

Measure latency sources explicitly

Optimize queries and execution paths

Validate dependency behavior

Analyze coordination overhead

Revisit assumptions continuously

At Wisegigs.eu, scaling decisions follow diagnostic clarity rather than intuition.

Performance emerges from efficiency, not size.
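Measuring latency sources explicitly can start very small. The sketch below times each stage of a request with a context manager from the Python standard library. The stage names and the `time.sleep` calls are placeholders for real work, and a production system would more likely rely on a tracing or metrics library:

```python
import time
from collections import defaultdict
from contextlib import contextmanager

# Accumulated seconds per stage name.
timings: dict[str, float] = defaultdict(float)


@contextmanager
def timed(stage: str):
    """Add the wall-clock time spent inside the block to `timings[stage]`."""
    start = time.perf_counter()
    try:
        yield
    finally:
        timings[stage] += time.perf_counter() - start


def handle_request() -> None:
    with timed("database"):
        time.sleep(0.05)   # placeholder for a query
    with timed("render"):
        time.sleep(0.005)  # placeholder for template rendering


handle_request()
slowest = max(timings, key=timings.get)
for stage, seconds in timings.items():
    print(f"{stage}: {seconds * 1000:.1f} ms")
print(f"slowest stage: {slowest}")
```

A per-stage breakdown like this shows where a request actually spends its time, which is the information needed before deciding whether more capacity would help.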

Conclusion

Scaling increases resources.

It does not guarantee improved performance.

To recap:

Many bottlenecks are structural

Capacity differs from latency

Horizontal scaling introduces overhead

Inefficiencies magnify under load

Databases frequently dominate constraints

Caching requires disciplined design

Scaling may mask deeper issues

At Wisegigs.eu, sustainable performance improvements arise from understanding system behavior, removing inefficiencies, and treating scaling as part of a broader reliability strategy.

If scaling your infrastructure failed to improve performance, the real constraint may exist elsewhere.
