Default configurations are designed for ease of adoption.

Operating systems, control panels, and server software ship with baseline settings that prioritize compatibility and ease of deployment. Because these defaults reduce initial friction, many systems reach production without significant adjustments.

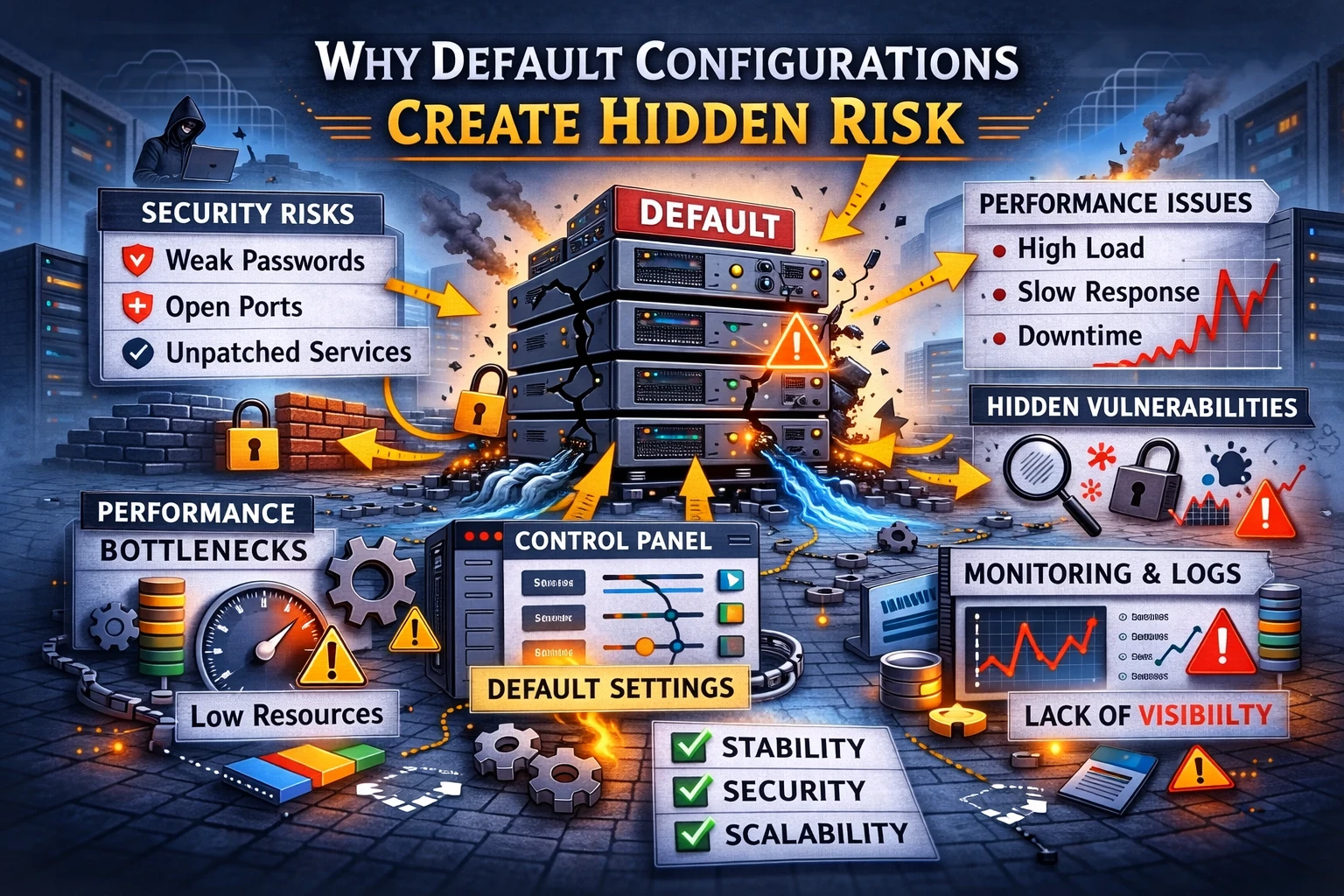

However, defaults are not optimized for real workloads.

At Wisegigs.eu, a substantial portion of infrastructure instability, security weaknesses, and performance anomalies can be traced back to unchanged baseline settings. While these systems often function correctly at launch, hidden constraints accumulate beneath the surface.

This article explains why default configurations introduce systemic risk, how convenience masks fragility, and why disciplined setup decisions matter more than most teams expect.

Defaults Optimize for General Use, Not Specific Needs

Baseline settings must support diverse environments.

Vendors cannot anticipate workload characteristics, traffic patterns, or threat models. Consequently, default values prioritize broad compatibility rather than targeted efficiency or resilience.

Production environments, by contrast, are highly specific.

Workload behavior, request distribution, and dependency interactions vary widely. Without deliberate tuning, generic settings frequently misalign with operational reality.

Functionality does not guarantee suitability.

Security Exposure Often Begins With Defaults

Initial configurations emphasize usability.

Services listen on predictable ports. Authentication rules remain permissive. Diagnostic interfaces may remain accessible. While these choices simplify onboarding, they also expand the attack surface.

Importantly, exposure does not require a targeted attack.

Automated scanning, credential probing, and opportunistic abuse continuously target publicly reachable systems. Unchanged defaults are therefore often the first weakness this traffic finds.

Security posture emerges from configuration discipline.
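
As a rough illustration of reviewing service exposure, the sketch below flags listeners bound to a wildcard address (`0.0.0.0` or `::`), which accept connections on every network interface. The service names, addresses, and ports are hypothetical sample data, and the helper names `is_wildcard_bind` and `exposed_listeners` are assumptions made for this example. On a real host the list would come from the output of a socket-listing tool.

```python
import ipaddress

def is_wildcard_bind(bind_address: str) -> bool:
    """Return True when the address means 'listen on every interface'."""
    return ipaddress.ip_address(bind_address).is_unspecified

def exposed_listeners(listeners):
    """Keep only the (service, address, port) entries bound to a wildcard address."""
    return [entry for entry in listeners if is_wildcard_bind(entry[1])]

# Hypothetical inventory of listening sockets, for illustration only.
sample_listeners = [
    ("cache", "0.0.0.0", 6379),
    ("database", "127.0.0.1", 5432),
    ("admin-panel", "::", 8080),
]

for service, address, port in exposed_listeners(sample_listeners):
    print(f"{service} listens on all interfaces ({address}:{port})")
```

A wildcard bind is not a vulnerability by itself. It only marks the services whose reachability should be a deliberate decision, backed by a firewall rule or authentication.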

Authoritative hardening references consistently warn against relying on baseline settings alone:

https://ubuntu.com/security

https://www.nginx.com/resources/wiki/start/topics/tutorials/hardening/

Performance Constraints Remain Invisible at Low Load

Many inefficiencies hide during early operation.

Caching behavior, worker limits, connection handling, and memory allocation may appear adequate under minimal traffic. Because systems respond normally, defaults remain unquestioned.

Scaling alters this perception.

Increased concurrency exposes resource contention, scheduling delays, and throughput bottlenecks. Consequently, performance degradation may appear sudden despite originating from long-standing configuration mismatches.

Load reveals latent constraints.
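
One way to reason about whether a worker limit fits a workload is Little's law: the average number of in-flight requests equals the arrival rate multiplied by the average time each request takes. The sketch below applies it, assuming a hypothetical default limit of 5 workers and a 250 ms average service time. Both numbers are invented for illustration and do not come from any specific product.

```python
import math

DEFAULT_WORKER_LIMIT = 5  # hypothetical default, not taken from any real product

def required_workers(requests_per_second: float, avg_service_seconds: float) -> int:
    """Little's law: mean concurrent requests = arrival rate x mean service time."""
    return math.ceil(requests_per_second * avg_service_seconds)

def worker_shortfall(requests_per_second: float, avg_service_seconds: float,
                     worker_limit: int = DEFAULT_WORKER_LIMIT) -> int:
    """Workers missing at this load; 0 means the limit is sufficient on average."""
    needed = required_workers(requests_per_second, avg_service_seconds)
    return max(0, needed - worker_limit)

for rps in (10, 200):
    needed = required_workers(rps, 0.25)
    missing = worker_shortfall(rps, 0.25)
    print(f"{rps} req/s needs {needed} workers, shortfall {missing}")
```

At 10 requests per second the assumed limit has headroom, while at 200 it falls far short. The estimate covers average load only, so bursty traffic needs additional margin.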

Control Panels Encourage False Confidence

Server panels simplify management.

They abstract complexity, automate common tasks, and reduce manual intervention. While these tools improve accessibility, they also obscure underlying behavior.

Defaults often persist behind the interface.

Without deeper inspection, administrators may assume that panel-driven environments are inherently optimized. In practice, baseline values frequently remain intact.

Abstraction does not eliminate responsibility.
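
A simple way to check what a panel has actually changed is to compare the effective configuration with the vendor baseline. The following is a minimal sketch of that comparison. The setting names and values are hypothetical, and the function `unchanged_defaults` is an assumption made for this example rather than part of any panel's API.

```python
def unchanged_defaults(effective: dict, vendor_defaults: dict) -> list:
    """List settings whose effective value still equals the vendor default.

    A setting missing from the effective config is treated as implicitly
    left at its default.
    """
    return sorted(
        key
        for key, default in vendor_defaults.items()
        if effective.get(key, default) == default
    )

# Hypothetical values, for illustration only.
vendor_defaults = {"max_connections": 100, "keepalive_timeout": 75, "log_level": "warn"}
effective_config = {"max_connections": 400, "log_level": "warn"}

print(unchanged_defaults(effective_config, vendor_defaults))
```

A setting on this list is not necessarily wrong. The list only shows which values nobody has reviewed yet.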

Resource Allocation Behaves Differently Than Expected

Default limits rarely reflect workload intensity.

Thread counts, connection caps, timeout values, and scheduling thresholds assume generalized conditions. Under variable traffic, these assumptions frequently fail.

As a result, systems may exhibit intermittent instability.

Symptoms include latency spikes, request queuing, and unpredictable response behavior. Because failures appear inconsistent, diagnosis becomes challenging.

Configuration logic shapes runtime behavior.
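
The intermittent nature of these symptoms can be shown with a small simulation. The sketch below assumes a fixed capacity of 10 requests per time step (a hypothetical cap) and feeds it two invented traffic patterns with the same total volume: one steady, one bursty. Requests that exceed the cap wait in a backlog.

```python
def simulate_backlog(arrivals_per_tick, capacity_per_tick):
    """Return the number of queued requests after each tick."""
    backlog = 0
    history = []
    for arrivals in arrivals_per_tick:
        # Serve up to the cap each tick; anything left over waits for the next one.
        backlog = max(0, backlog + arrivals - capacity_per_tick)
        history.append(backlog)
    return history

CAPACITY = 10                   # hypothetical per-tick connection cap
steady = [8, 8, 8, 8, 8, 8]     # 48 requests in total
bursty = [2, 2, 30, 2, 2, 10]   # also 48 requests in total

print("steady:", simulate_backlog(steady, CAPACITY))
print("bursty:", simulate_backlog(bursty, CAPACITY))
```

The steady pattern never queues, while the bursty one builds a backlog that takes several ticks to drain. Average load alone therefore says little about whether a cap is adequate.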

Defaults Accumulate Risk Gradually

Configuration weaknesses rarely produce immediate failure.

Instead, small inefficiencies compound over time. Dependency growth, traffic shifts, and feature expansion amplify their impact.

Eventually, minor misalignments create systemic fragility.

The absence of early incidents often reinforces complacency, which further delays corrective action.

Stability erodes silently.

Operational Complexity Increases Without Visibility

Defaults frequently prioritize minimal verbosity.

Logging levels, monitoring thresholds, and diagnostic output may be conservative. While this reduces noise, it also limits situational awareness.

When anomalies occur, insufficient visibility complicates root-cause analysis.

Detection delays increase incident duration.

Observability is a configuration decision.
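
Python's standard `logging` module is a convenient example of a quiet default: the root logger starts at the `WARNING` level, so informational messages are dropped unless a level is set explicitly. The sketch below makes that choice deliberate. The helper `configure_service_logging` and the service name `checkout` are hypothetical.

```python
import logging

def configure_service_logging(name: str, level: int = logging.INFO) -> logging.Logger:
    """Give one service logger an explicit level and a timestamped format."""
    logger = logging.getLogger(name)
    logger.setLevel(level)
    if not logger.handlers:  # avoid stacking duplicate handlers on repeated calls
        handler = logging.StreamHandler()
        handler.setFormatter(
            logging.Formatter("%(asctime)s %(levelname)s %(name)s %(message)s")
        )
        logger.addHandler(handler)
    return logger

log = configure_service_logging("checkout")  # hypothetical service name
log.info("worker pool resized to %d", 16)    # emitted now; dropped at the WARNING default
log.debug("per-request detail")              # still suppressed at INFO
```

Which level is right depends on the service. The point is that the level is chosen rather than inherited.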

Why Defaults Feel Safer Than They Are

Defaults imply vendor endorsement.

Because settings originate from software providers, teams often assume they represent best practices. In reality, defaults represent safe starting points rather than production-ready conclusions.

Context determines correctness.

Generic safety does not equal operational suitability.

What Disciplined Server Setup Prioritizes

Resilient systems evolve beyond baseline assumptions.

Effective teams:

Review service exposure explicitly

Adjust resource limits intentionally

Validate workload behavior under load

Harden authentication surfaces

Increase observability depth

Revisit configurations regularly

At Wisegigs.eu, server setup is treated as a reliability and security engineering activity rather than a deployment formality.

Defaults are beginnings, not decisions.

Conclusion

Default configurations simplify deployment.

They do not guarantee stability, performance, or security.

To recap:

Defaults optimize for general compatibility

Security exposure often starts at baseline settings

Performance constraints hide under low load

Control panels mask persistent defaults

Resource limits require contextual tuning

Risk accumulates gradually

Observability depends on configuration choices

At Wisegigs.eu, long-lived infrastructure stability emerges from deliberate configuration discipline rather than inherited baseline values.

If your server works but behaves unpredictably, unchanged defaults may be the hidden cause.
