Cache Distribution Defines Load Behavior

Application performance under load depends on where requests are absorbed: at a cache layer or at the origin.

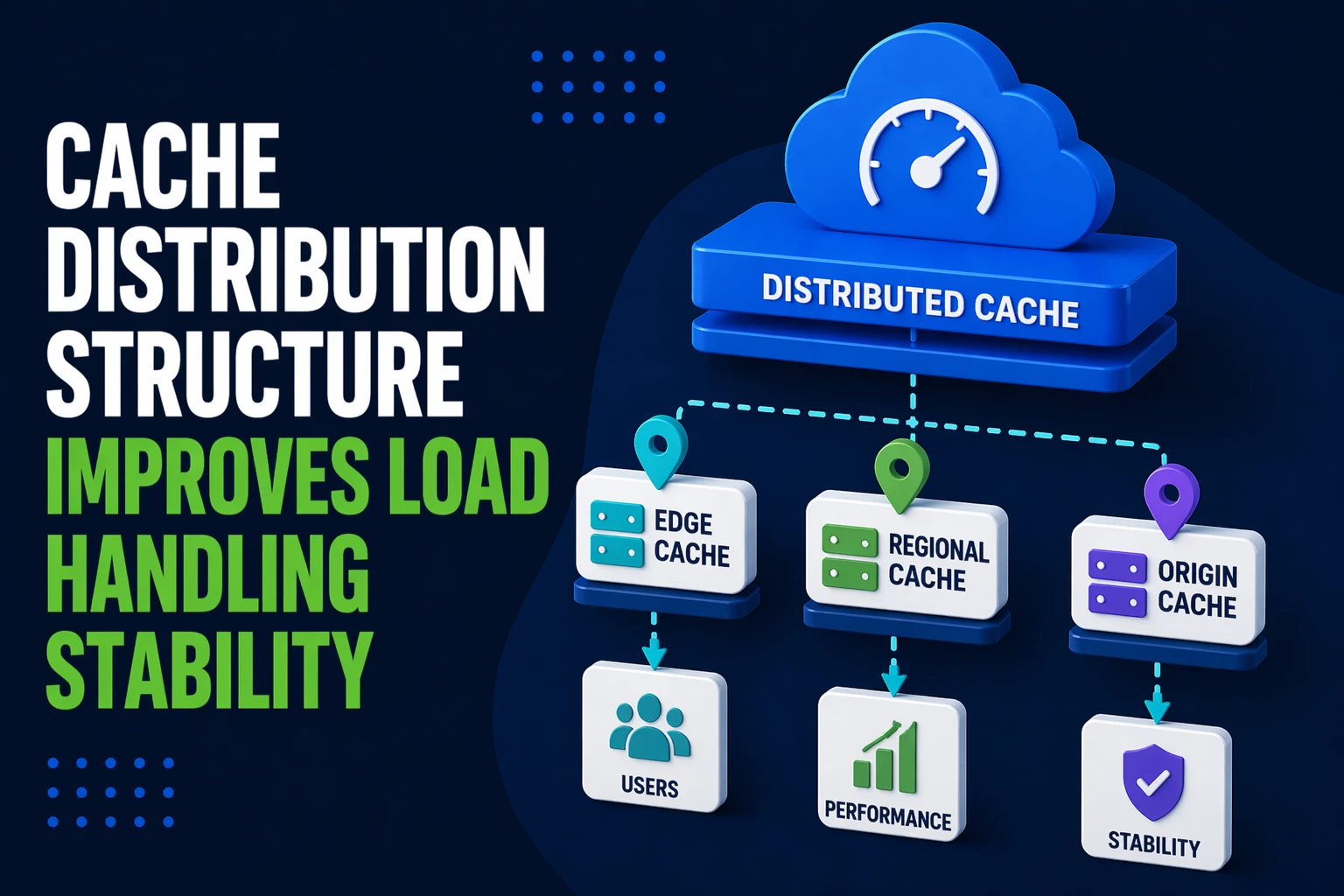

A single centralized cache is both a bottleneck and a single point of failure. When it saturates or its miss rate rises, the missed requests fall through to the origin servers in bursts.

Distributed cache layers spread request handling across multiple nodes, so no single node has to absorb a traffic spike alone. As a result, load becomes more predictable under spikes.
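
One common way to spread keys across cache nodes is consistent hashing. The following is a minimal sketch, not a production implementation: the node names, the `HashRing` class, and the choice of 100 virtual nodes per node are all illustrative assumptions.

```python
import bisect
import hashlib


def _position(value: str) -> int:
    """Map a string to a stable integer position on the ring."""
    return int(hashlib.sha256(value.encode("utf-8")).hexdigest(), 16)


class HashRing:
    """Consistent-hash ring with virtual nodes (replica count is an assumption)."""

    def __init__(self, nodes, replicas=100):
        # Each node is placed on the ring many times to even out its share.
        self._ring = sorted(
            (_position(f"{node}#{i}"), node)
            for node in nodes
            for i in range(replicas)
        )
        self._positions = [pos for pos, _ in self._ring]

    def node_for(self, key: str) -> str:
        """Return the first node clockwise from the key's position."""
        idx = bisect.bisect(self._positions, _position(key)) % len(self._ring)
        return self._ring[idx][1]


# Hypothetical node names; the same key always maps to the same node.
ring = HashRing(["cache-a", "cache-b", "cache-c"])
print(ring.node_for("/products/42"))
```

With this layout, removing a node only remaps the keys that node owned; keys on the remaining nodes stay where they are, which limits the miss burst after a node failure.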

Unbalanced distribution concentrates traffic on a few hot nodes, which makes those nodes more likely to fail under load.

Structured distribution spreads keys evenly and keeps load stable.

Edge Caching Improves Request Absorption

Edge caching moves content closer to users.

Without edge layers, every request that is not served from the browser cache travels to the origin. As a result, latency increases and infrastructure load spikes.

Edge nodes absorb repeated requests at the network boundary. Consequently, origin systems handle fewer requests.

Common edge inconsistencies include:

- missing edge rules for static assets

- inconsistent cache TTLs across regions

- fragmented CDN configuration affecting hit rates

- uneven distribution of cached content

Consistent edge rules keep hit rates predictable, and predictable hit rates keep origin load stable.
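
Missing static-asset rules and inconsistent TTLs can often be avoided by deriving the `Cache-Control` header from one shared policy. The sketch below is illustrative only: the extension list, the `/api/` prefix, and the lifetimes are assumptions, and the long `immutable` lifetime assumes static assets use fingerprinted file names.

```python
from pathlib import PurePosixPath

# Hypothetical policy values; adjust extensions and lifetimes to the application.
STATIC_EXTENSIONS = {".css", ".js", ".png", ".jpg", ".svg", ".woff2"}


def cache_control_for(path: str) -> str:
    """Return a Cache-Control header value for a request path."""
    suffix = PurePosixPath(path).suffix.lower()
    if suffix in STATIC_EXTENSIONS:
        # Fingerprinted static assets: long-lived and safe for shared caches.
        return "public, max-age=31536000, immutable"
    if path.startswith("/api/"):
        # Per-user or fast-changing responses: keep them out of every cache.
        return "private, no-store"
    # Pages: short browser TTL, longer TTL for shared (edge) caches via s-maxage.
    return "public, max-age=60, s-maxage=300"
```

Because every region evaluates the same function, the TTL for a given path cannot drift between regions.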

Cloudflare explains edge caching benefits for performance stability:

https://developers.cloudflare.com/cache/

Regional Cache Layers Stabilize Latency

Applications serving global users require geographic distribution.

With single-region caching, users far from that region experience slower responses. Latency therefore varies widely by user location.

Regional cache nodes reduce travel distance. Consequently, latency becomes more consistent.

Common regional inconsistencies include:

- unequal cache population across regions

- missing replication strategies

- inconsistent synchronization affecting freshness

- fragmented region routing logic

Structured regional caching improves latency predictability.

Predictable response times improve user experience stability.
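
Region routing logic is easier to keep consistent when it lives in one small function. The following sketch assumes latency measurements and a health set are already available; the function name `pick_region` and the region names are hypothetical.

```python
from typing import AbstractSet, Mapping


def pick_region(latencies_ms: Mapping[str, float], healthy: AbstractSet[str]) -> str:
    """Return the healthy region with the lowest measured latency."""
    candidates = {
        region: ms for region, ms in latencies_ms.items() if region in healthy
    }
    if not candidates:
        raise LookupError("no healthy cache region available")
    return min(candidates, key=candidates.get)


# Hypothetical measurements for one client.
measured = {"eu-west": 20.0, "us-east": 95.0, "ap-south": 180.0}
print(pick_region(measured, healthy={"us-east", "ap-south"}))
```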

Cache Segmentation Reduces Resource Contention

Caching different data types in a single layer introduces competition.

In a shared cache pool, a burst of one data type can evict entries of another, which makes eviction unpredictable. As a result, important data may be removed prematurely.

Segmented caches isolate workloads.

Examples include:

- static asset cache

- dynamic response cache

- database query cache

- session storage cache

Segmentation improves allocation predictability.

Predictable allocation improves system stability.
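
The isolation effect can be illustrated with one small LRU cache per workload. This is a minimal in-process sketch; the `LRUCache` class, the segment names, and the capacities are assumptions chosen for illustration.

```python
from collections import OrderedDict


class LRUCache:
    """Fixed-capacity cache that evicts the least recently used entry."""

    def __init__(self, capacity: int):
        self.capacity = capacity
        self._data = OrderedDict()

    def get(self, key, default=None):
        if key not in self._data:
            return default
        self._data.move_to_end(key)  # mark as most recently used
        return self._data[key]

    def put(self, key, value):
        self._data[key] = value
        self._data.move_to_end(key)
        if len(self._data) > self.capacity:
            self._data.popitem(last=False)  # evict the least recently used entry


# Hypothetical segments: each workload has its own capacity budget.
segments = {
    "static": LRUCache(capacity=1000),
    "query": LRUCache(capacity=200),
    "session": LRUCache(capacity=500),
}

# A burst of query results can only evict other query results.
segments["session"].put("sid-1", {"user": "alice"})
for i in range(10_000):
    segments["query"].put(f"q{i}", i)
print(segments["session"].get("sid-1"))
```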

Consistent Invalidation Preserves Accuracy

Caching without invalidation control introduces stale data risk.

Inconsistent invalidation reduces trust in cached responses.

Structured invalidation ensures data accuracy.

Common inconsistencies include:

- manual cache clearing causing unpredictability

- missing invalidation triggers after updates

- inconsistent TTL strategies

- fragmented purge logic across layers

Structured invalidation improves reliability.

Predictable freshness improves stability.
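
One way to combine a TTL with an invalidation trigger is to purge the cached entry in the same code path that writes the update. The sketch below is an assumption-laden illustration: `TTLCache`, `update_product`, the `product:` key prefix, and the plain dict standing in for the database are all hypothetical.

```python
import time


class TTLCache:
    """In-memory cache with a fixed TTL and explicit invalidation."""

    def __init__(self, ttl_seconds: float, clock=time.monotonic):
        self.ttl = ttl_seconds
        self._clock = clock
        self._data = {}  # key -> (value, expires_at)

    def get(self, key):
        entry = self._data.get(key)
        if entry is None:
            return None
        value, expires_at = entry
        if self._clock() >= expires_at:
            del self._data[key]  # expired: treat as a miss
            return None
        return value

    def set(self, key, value):
        self._data[key] = (value, self._clock() + self.ttl)

    def invalidate(self, key):
        self._data.pop(key, None)


def update_product(db: dict, cache: TTLCache, product_id: str, fields: dict) -> None:
    """Write to the source of truth, then purge the cached copy."""
    db[product_id] = {**db.get(product_id, {}), **fields}
    cache.invalidate(f"product:{product_id}")
```

The TTL acts as a safety net: if a purge is ever missed, the stale entry still expires after `ttl_seconds`.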

Google web.dev explains cache freshness strategies:

https://web.dev/http-cache/

Routing Logic Influences Cache Efficiency

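Cache efficiency depends on how requests are routed and keyed.

If equivalent requests reach different nodes or map to different cache keys, the same content is cached several times and hit rates fall.

Low hit rates push more traffic to the origin.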

Common routing issues include:

- inconsistent URL normalization

- fragmented query parameter handling

- incomplete or inconsistent cache keys that prevent reuse

- uneven load balancing across nodes

Structured routing improves cache efficiency.

Predictable routing improves load distribution.
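
URL normalization and query parameter handling can be sketched as a single cache-key function. The ignored-parameter list and the trailing-slash rule below are assumptions; some applications serve different content for `/a` and `/a/`, in which case the slash must be kept.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical list of parameters that do not change the response.
IGNORED_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "fbclid", "gclid"}


def cache_key(url: str) -> str:
    """Normalize a URL so equivalent requests share one cache entry."""
    parts = urlsplit(url)
    # Drop tracking parameters and sort the rest so order does not matter.
    query = sorted(
        (name, value)
        for name, value in parse_qsl(parts.query, keep_blank_values=True)
        if name not in IGNORED_PARAMS
    )
    # Assumption: a trailing slash does not change the response.
    path = parts.path.rstrip("/") or "/"
    return urlunsplit(
        (parts.scheme.lower(), parts.netloc.lower(), path, urlencode(query), "")
    )


print(cache_key("https://Example.com/shop/?b=2&a=1&utm_source=newsletter"))
# prints: https://example.com/shop?a=1&b=2
```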

Monitoring Validates Distribution Performance

Cache systems require continuous visibility.

Without monitoring, inefficiencies remain hidden.

Structured monitoring shows where tuning is needed.

Key indicators include:

- cache hit ratio

- origin request rate

- latency distribution

- regional performance variation

Unmonitored systems drift toward inefficiency.

Measured systems maintain stability.
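
Two of these indicators can be computed from simple counters and latency samples. This is a minimal sketch with hypothetical names (`CacheStats`, `percentile`); real deployments would usually read these values from CDN or proxy metrics instead.

```python
from dataclasses import dataclass


@dataclass
class CacheStats:
    """Hit and miss counters for one cache layer or region."""

    hits: int = 0
    misses: int = 0

    def record(self, hit: bool) -> None:
        if hit:
            self.hits += 1
        else:
            self.misses += 1

    @property
    def hit_ratio(self) -> float:
        total = self.hits + self.misses
        return self.hits / total if total else 0.0


def percentile(samples, pct: int) -> float:
    """Nearest-rank percentile (pct is an integer from 1 to 100)."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = -(-len(ordered) * pct // 100)  # ceiling division
    return ordered[max(rank, 1) - 1]
```

Every miss is also an origin request, so `misses` divided by the length of the measurement window gives the origin request rate for that layer.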

What Reliable Cache Distribution Structures Prioritize

Stable performance depends on predictable request handling.

Reliable cache distribution systems typically prioritize:

- edge-level request absorption

- regional cache consistency

- segmented workload isolation

- structured invalidation logic

- optimized routing strategies

- continuous performance monitoring

These characteristics reduce infrastructure stress.

Reduced stress improves load handling stability.

At Wisegigs.eu, caching strategy focuses on distributing load predictably across layers to maintain consistent application performance.

Structured distribution improves long-term reliability.
