Infrastructure stability depends on how early and how accurately problems are detected.

Incidents rarely become critical immediately. Instead, failures evolve through progressive degradation patterns. Early signals often indicate abnormal system behavior before visible outages occur.

Alert logic determines how quickly early signals are noticed.

When alert thresholds are poorly defined, signals either drown in noise or go undetected. Both conditions reduce response effectiveness.

At Wisegigs.eu, infrastructure monitoring audits frequently reveal incidents that escalated because alerts were poorly calibrated, not because the failure was unexpected. The signals existed, yet the alert structure failed to communicate their urgency.

Signal clarity influences response speed.

Predictable alert logic improves incident containment.

Threshold Design Influences Signal Accuracy

Monitoring systems produce continuous metric streams.

Threshold logic defines when metric variation becomes actionable.

Incorrect thresholds generate misleading signals.

Common threshold design risks include:

overly sensitive triggers producing alert fatigue

overly tolerant thresholds delaying detection

static thresholds ignoring workload variability

unstructured thresholds lacking severity classification

Accurate thresholds make signals easier to interpret.

Reliable signals lead to better response decisions.

The Google SRE book's chapter on monitoring distributed systems discusses how to keep alerts actionable and low in noise:

https://sre.google/sre-book/monitoring-distributed-systems/

Calibrated thresholds improve incident detection precision.
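
The difference between a static threshold and one that follows workload variability can be illustrated with a minimal sketch. The function names, the sample values, and the choice of "mean plus k standard deviations" as a baseline are assumptions made for illustration, not a method described by any specific monitoring tool.

```python
from statistics import mean, pstdev


def static_breach(value: float, limit: float) -> bool:
    """Fixed threshold: breached whenever the value exceeds a constant limit."""
    return value > limit


def baseline_breach(value: float, history: list[float], k: float = 3.0) -> bool:
    """Baseline-relative threshold (illustrative assumption): breached when the
    value exceeds the mean of recent history by more than k standard deviations."""
    if len(history) < 2:
        return False  # too little data to estimate a baseline
    return value > mean(history) + k * pstdev(history)


# Hypothetical CPU utilization samples (percent) for two periods of the day.
busy_hours = [70, 72, 68, 75, 71]
quiet_hours = [20, 22, 18, 25, 21]

# A fixed 60% limit fires on ordinary busy-hour load and misses a quiet-hour anomaly.
print(static_breach(76, 60), static_breach(45, 60))

# The baseline-relative check accepts 76% in busy hours and flags 45% in quiet hours.
print(baseline_breach(76, busy_hours), baseline_breach(45, quiet_hours))
```

A perfectly flat history gives a standard deviation of zero, so a production version would likely need a minimum tolerance band as well.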

Alert Noise Reduces Response Effectiveness

Frequent false alerts desensitize responders.

Repeated false positives erode the sense of urgency.

When urgency drops, responses are more likely to be delayed.

Common alert noise sources include:

temporary resource spikes incorrectly interpreted as incidents

redundant alerts generated across multiple monitoring tools

poor aggregation logic producing duplicate notifications

misconfigured thresholds generating irrelevant warnings

Reducing noise makes signals more trustworthy.

Trusted signals get faster responses.

Less noise also makes incident prioritization clearer.
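
Two of the noise sources above, temporary spikes and duplicate notifications, can be filtered with very little logic. The sketch below is one possible approach: the field names (`service`, `check`, `timestamp`) and both function names are hypothetical, and real alerting tools usually offer equivalent built-in features.

```python
def sustained_breach(samples: list[float], limit: float, min_consecutive: int) -> bool:
    """Return True only if the most recent `min_consecutive` samples all exceed
    `limit`, so a single short spike does not raise an alert."""
    if min_consecutive <= 0:
        raise ValueError("min_consecutive must be positive")
    if len(samples) < min_consecutive:
        return False
    return all(sample > limit for sample in samples[-min_consecutive:])


def deduplicate(alerts: list[dict], window_seconds: float) -> list[dict]:
    """Keep one alert per (service, check) pair within each time window,
    measured from the last alert that was kept for that pair."""
    last_kept: dict[tuple[str, str], float] = {}
    kept = []
    for alert in sorted(alerts, key=lambda a: a["timestamp"]):
        key = (alert["service"], alert["check"])
        previous = last_kept.get(key)
        if previous is None or alert["timestamp"] - previous >= window_seconds:
            kept.append(alert)
            last_kept[key] = alert["timestamp"]
    return kept


# Hypothetical alerts: three for the same check, one for a different service.
alerts = [
    {"service": "api", "check": "cpu", "timestamp": 0},
    {"service": "api", "check": "cpu", "timestamp": 30},
    {"service": "db", "check": "cpu", "timestamp": 10},
    {"service": "api", "check": "cpu", "timestamp": 400},
]
for alert in deduplicate(alerts, window_seconds=300):
    print(alert["service"], alert["timestamp"])
```

The second `api` alert falls inside the 300-second window and is dropped, while the alert at 400 seconds starts a new window.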

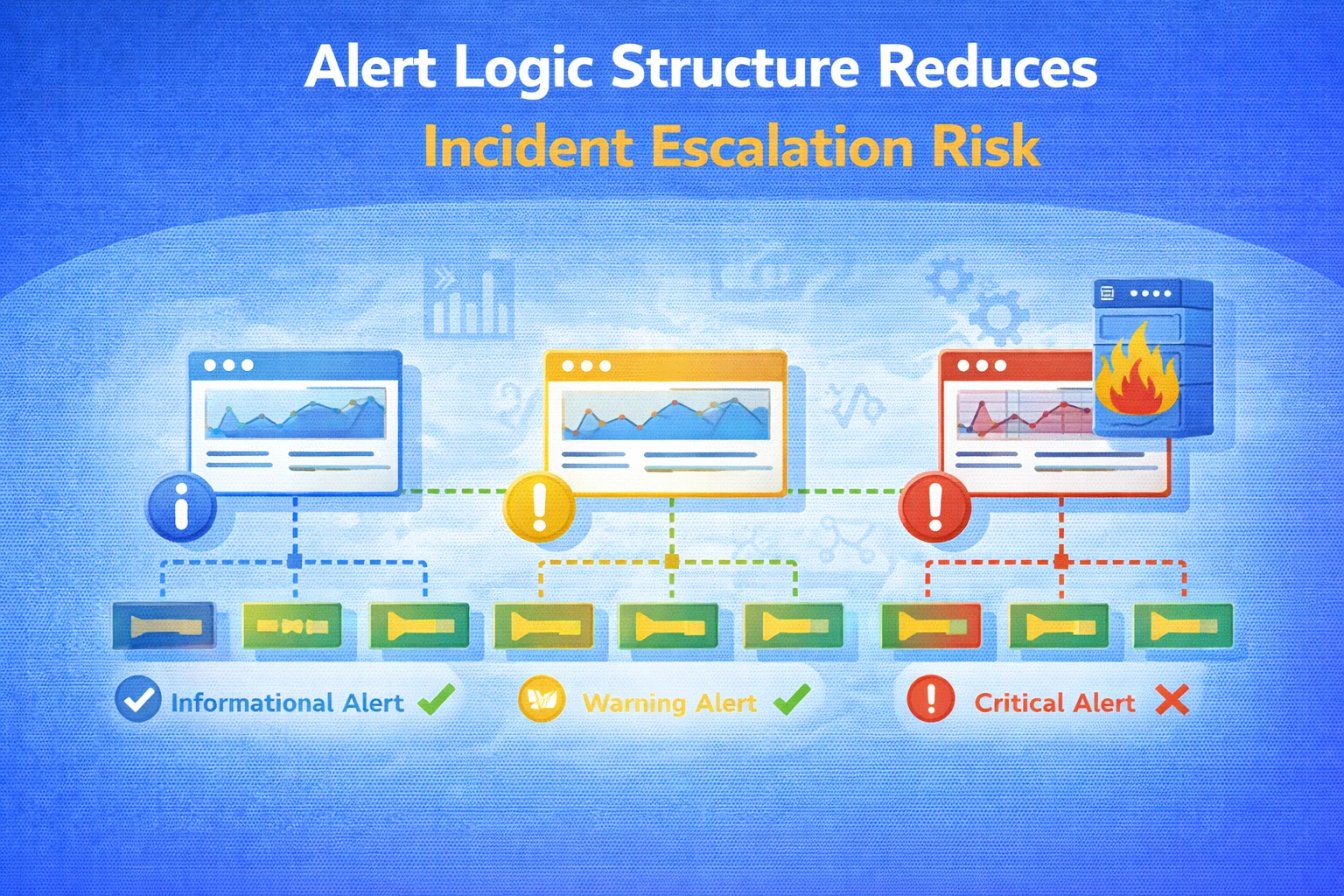

Severity Classification Improves Escalation Predictability

Incidents vary in operational impact.

Unclassified alerts leave their urgency ambiguous.

Severity levels define escalation boundaries.

Typical severity structure includes:

informational signals requiring observation only

warning signals indicating emerging instability

critical alerts requiring immediate response

emergency alerts indicating imminent or ongoing service disruption

Classification improves prioritization clarity.

Clear prioritization improves response consistency.

Structured severity logic improves escalation predictability.
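
A severity structure like the one above can be expressed as an ordered set of boundaries. This is a minimal sketch: the `OK` level, the error-rate boundaries, and the names `Severity` and `classify` are illustrative assumptions, and real boundaries depend on the service.

```python
from enum import IntEnum


class Severity(IntEnum):
    OK = 0          # assumption: added so that healthy values have a level too
    INFO = 1        # observation only
    WARNING = 2     # emerging instability
    CRITICAL = 3    # immediate response required
    EMERGENCY = 4   # service disruption


# Hypothetical lower bounds for an error rate in percent, highest severity first.
ERROR_RATE_LEVELS = [
    (10.0, Severity.EMERGENCY),
    (5.0, Severity.CRITICAL),
    (1.0, Severity.WARNING),
    (0.1, Severity.INFO),
]


def classify(value: float, levels: list[tuple[float, Severity]]) -> Severity:
    """Return the highest severity whose lower bound the value reaches."""
    for lower_bound, severity in levels:
        if value >= lower_bound:
            return severity
    return Severity.OK


for rate in (0.05, 0.5, 2.0, 7.0, 12.0):
    print(rate, classify(rate, ERROR_RATE_LEVELS).name)
```

Because `Severity` is an `IntEnum`, escalation rules can compare levels directly, for example paging only when the level is `Severity.CRITICAL` or higher.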

Monitoring Coverage Influences Detection Reliability

Incomplete monitoring creates visibility gaps.

Gaps reduce the chance of detecting anomalies.

Observable systems improve diagnostic confidence.

Common monitoring layers include:

infrastructure metrics measuring CPU and memory utilization

application performance metrics measuring response latency

database performance indicators measuring query execution duration

network metrics measuring throughput variability

error tracking signals measuring failure frequency

Coverage completeness improves anomaly detection accuracy.

Accurate detection improves response readiness.
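
Visibility gaps can be found by comparing what each service actually reports against the layers listed above. The layer names, the service inventory, and the function name in this sketch are hypothetical.

```python
# Assumed layer names, mirroring the monitoring layers listed in this section.
REQUIRED_LAYERS = {"infrastructure", "application", "database", "network", "errors"}


def coverage_gaps(monitored: dict[str, set[str]], required: set[str]) -> dict[str, list[str]]:
    """Return, per service, the required monitoring layers that are missing.
    Services with full coverage are omitted from the result."""
    gaps = {}
    for service, layers in monitored.items():
        missing = required - layers
        if missing:
            gaps[service] = sorted(missing)
    return gaps


# Hypothetical inventory of which layers each service currently reports.
inventory = {
    "checkout": {"infrastructure", "application", "database", "network", "errors"},
    "search": {"infrastructure", "application"},
}
print(coverage_gaps(inventory, REQUIRED_LAYERS))
```

Not every service needs every layer, so a real audit would probably define the required set per service type rather than globally.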

Alert Timing Influences Incident Containment Scope

Early detection reduces impact propagation.

Delayed alerts allow more components to be affected.

Propagation increases recovery complexity.

Common timing risks include:

alert delays caused by long evaluation intervals

slow metric aggregation increasing detection latency

alert thresholds set so high that early warning signals are ignored

lack of predictive indicators for gradual degradation

Timely alerts improve containment effectiveness.

Early response reduces the likelihood of system disruption.

Less disruption means more stable availability.
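
The timing risks above can be estimated before an incident happens. The sketch below assumes a simplified pull-based model: a sample is collected every scrape interval, rules run every evaluation interval, and an alert fires once the condition has held for a hold duration. It ignores notification delivery time. The second function uses a plain linear extrapolation between the first and last sample as a stand-in for a predictive indicator. All names and figures are illustrative.

```python
from typing import Optional


def worst_case_detection_delay(scrape_interval_s: int,
                               evaluation_interval_s: int,
                               hold_duration_s: int) -> int:
    """Approximate upper bound, in seconds, between a fault starting and the
    alert firing under the simplified model described above."""
    # Evaluation ticks needed to cover the hold duration (ceiling division).
    hold_ticks = -(-hold_duration_s // evaluation_interval_s)
    return scrape_interval_s + evaluation_interval_s + hold_ticks * evaluation_interval_s


def seconds_until_limit(samples: list[tuple[float, float]], limit: float) -> Optional[float]:
    """Linearly extrapolate (timestamp, value) samples and return the seconds
    remaining until `limit` is reached, or None if the value is not growing."""
    if len(samples) < 2:
        return None
    (t_first, v_first), (t_last, v_last) = samples[0], samples[-1]
    if v_last >= limit:
        return 0.0
    if t_last <= t_first or v_last <= v_first:
        return None
    return (limit - v_last) * (t_last - t_first) / (v_last - v_first)


# One-minute intervals with a five-minute hold versus tighter settings.
print(worst_case_detection_delay(60, 60, 300))
print(worst_case_detection_delay(15, 15, 60))

# Hypothetical disk usage: 50% at t=0 and 60% one hour later, with a 90% limit.
print(seconds_until_limit([(0, 50.0), (3600, 60.0)], 90.0))
```

Shorter intervals reduce the delay but increase evaluation load and sensitivity to brief spikes, so the values are a trade-off rather than something to minimize blindly.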

Correlated Signals Improve Diagnostic Precision

Single metrics rarely describe complete system behavior.

Correlated signals improve interpretation accuracy.

Cross-metric analysis improves anomaly detection reliability.

Typical correlated signal patterns include:

increased CPU utilization combined with rising response latency

error rate increase combined with traffic variation

database latency increase combined with query volume changes

memory saturation combined with increased swap utilization

Correlation improves diagnostic clarity.

Clear diagnosis improves remediation speed.

Multi-signal visibility makes reliability more predictable.
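
Two of the correlated patterns above can be checked together rather than as isolated alerts. This is a rough sketch: the `Snapshot` fields, the multipliers, the 85% CPU limit, and the finding labels are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Snapshot:
    cpu_percent: float
    p95_latency_ms: float
    error_rate_percent: float
    requests_per_second: float


def correlate(current: Snapshot, baseline: Snapshot) -> list[str]:
    """Compare a current snapshot to a baseline and report which combined
    patterns are present. Thresholds here are illustrative, not recommendations."""
    cpu_high = current.cpu_percent >= 85
    latency_up = current.p95_latency_ms > 1.5 * baseline.p95_latency_ms
    errors_up = (current.error_rate_percent >= 1.0
                 and current.error_rate_percent > 2 * baseline.error_rate_percent)
    traffic_up = current.requests_per_second > 1.5 * baseline.requests_per_second

    findings = []
    if cpu_high and latency_up:
        findings.append("cpu_with_latency")
    if errors_up and traffic_up:
        findings.append("errors_with_traffic_growth")
    if errors_up and not traffic_up:
        findings.append("errors_without_traffic_growth")
    return findings


baseline = Snapshot(cpu_percent=40, p95_latency_ms=200,
                    error_rate_percent=0.2, requests_per_second=100)

# High CPU together with more than doubled latency.
print(correlate(Snapshot(92, 450, 0.3, 105), baseline))

# Errors rising while traffic stays flat.
print(correlate(Snapshot(45, 210, 3.0, 102), baseline))
```

Errors that rise along with traffic point toward load, while errors that rise on flat traffic point toward something else, which is the distinction a single-metric alert cannot make.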

Alert Routing Improves Response Efficiency

Alert delivery pathways influence response timing.

Incorrect routing delays remediation.

Clear ownership boundaries make accountability explicit.

Common routing risks include:

alerts delivered to inactive communication channels

unclear definitions of team ownership and responsibility

multiple escalation paths creating response ambiguity

lack of redundancy in notification systems

Structured routing improves response continuity.

Clear ownership makes accountability predictable.

Reliable routing improves remediation speed.
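
Several of the routing risks above come down to an alert having no valid destination. The sketch below shows one way to guard against that, assuming a simple mapping from service to notification channels. The channel names, the mapping, and both function names are hypothetical.

```python
def resolve_targets(service: str,
                    routes: dict[str, list[str]],
                    active_channels: set[str],
                    fallback: list[str]) -> list[str]:
    """Return the active channels that own `service`. If the service has no
    owner, or none of its channels are active, use the fallback path."""
    targets = [channel for channel in routes.get(service, [])
               if channel in active_channels]
    return targets or list(fallback)


def unowned_services(services: list[str], routes: dict[str, list[str]]) -> list[str]:
    """List services that have no route defined at all."""
    return [service for service in services if not routes.get(service)]


# Hypothetical routing table and channel status.
routes = {"checkout": ["pager:payments", "chat:payments"]}
active = {"chat:payments", "pager:platform"}
fallback = ["pager:platform"]

print(resolve_targets("checkout", routes, active, fallback))  # inactive pager is skipped
print(resolve_targets("search", routes, active, fallback))    # no owner, fallback used
print(unowned_services(["checkout", "search"], routes))
```

Running a check like `unowned_services` periodically surfaces ownership gaps before an incident does.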

Observability Consistency Improves System Behavior Understanding

Observability includes metrics, logs, and traces.

Combined, they make system behavior easier to interpret accurately.

Consistent observability structure improves diagnostic continuity.

Common observability components include:

metrics describing system performance variation

logs describing event sequences

traces describing request lifecycle behavior

uptime monitoring indicating availability patterns

Integrated signals improve anomaly detection confidence.

Confidence improves response precision.

Consistent observability makes reliability more predictable.

The Reliability Pillar of the AWS Well-Architected Framework describes monitoring design principles:

https://docs.aws.amazon.com/wellarchitected/latest/reliability-pillar/welcome.html

Integrated visibility improves operational stability.

What Reliable Alert Logic Prioritizes

Stable infrastructure monitoring depends on signal clarity.

Reliable alert logic structures typically prioritize:

accurate threshold calibration

minimal signal noise

clear severity classification structure

comprehensive monitoring coverage

timely signal evaluation intervals

correlated signal interpretation logic

predictable alert routing structure

These characteristics improve incident detection accuracy.

Accurate detection improves containment effectiveness.

At Wisegigs.eu, monitoring architecture work focuses on reducing the ambiguity that makes alerts hard to interpret reliably.

Signal clarity improves operational predictability.

Structured alert logic improves long-term reliability.

Need help improving monitoring logic to reduce incident escalation risk?

Contact Wisegigs.eu